In case you missed it, we have just launched a new website as the home for all of our major publications and resources. The AI Assessment Scale has already been used and adapted by hundreds of education providers in K-12, higher education, and adult education worldwide. It has also been adapted for industry and translated into 30 languages.

The AI Assessment Scale is an idea, and we believe it is important that ideas are shared freely. That’s why, since the first publication, all of our resources regarding the AI Assessment Scale have been published under Open Access Licenses. You can find, make copies of, and edit all of the current AI Assessment Scale resources at this link to our CC BY-NC-SA 4.0 licensed materials, because ideas are open to everyone.

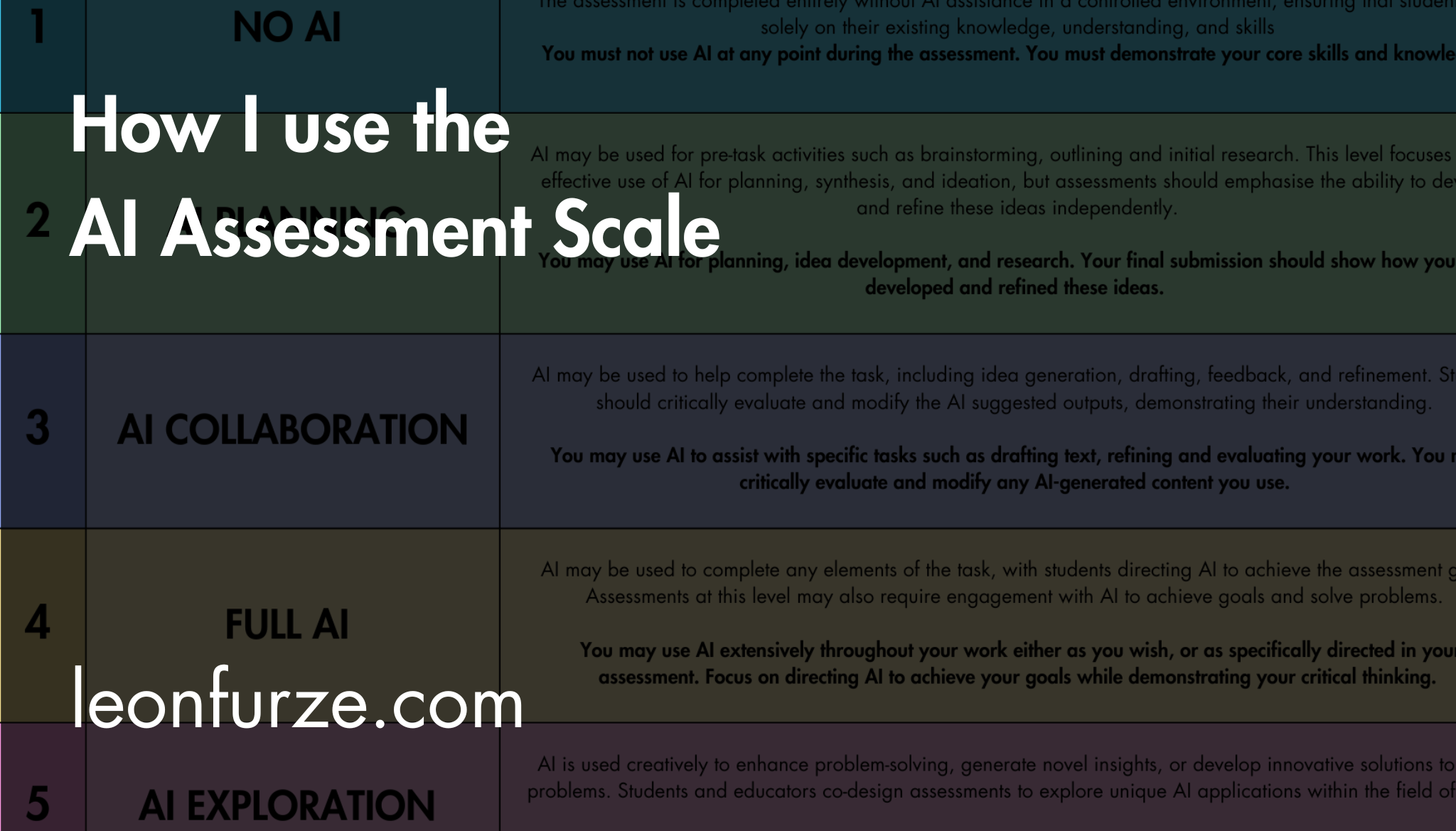

We have seen many great interpretations of the AI Assessment Scale. In this post, I discuss how I would implement the AIAS if I were responsible for rolling it out in a faculty or organisation. I have also checked in with co-authors Mike Perkins and Jasper Roe on this article, since we each have our own interpretations and examples.

This article is not a complete “how-to” guide, but it does articulate a lot of the thinking behind the scale, and provides examples of how I would personally apply it.

The Rationale for the AI Assessment Scale

We have written about this in each of our major publications, but at its core, the AI Assessment Scale developed from conversations with educators about the need for more than a binary “use or don’t use” approach to GenAI.

In 2023, we recognised that both educators and students were looking for advice and support on using AI appropriately across various disciplines. So I wrote the original AI Assessment Scale blog post with written assessments in mind, drawing on my 15 years of experience teaching secondary and tertiary English, literature, and literacy.

The rationale for the AI Assessment Scale is this: while we are coming to terms with this technology, both educators and students need support in understanding where and how these technologies might be useful, and where they might be best avoided. One way to provide that support is to break assessment task types into common categories familiar to a range of disciplines.

These categories are the levels of our scale.

In version two of the scale, certain other needs became apparent. Among these, the most important was the need for transparency and discourse with students. The AI Assessment Scale aims to help educators and students have transparent discussions about what they believe are appropriate and inappropriate uses of artificial intelligence.

Finally, we see the AI Assessment Scale primarily as an assessment design tool. Educators who are experts in their disciplines can use it to review their existing and forthcoming assessments in light of GenAI, drawing on their subject knowledge and an understanding of the technology's strengths and limitations to judge the best use (or non-use) of AI in a given context.

To sum up, my personal “core principles” regarding the AI Assessment Scale are:

- It reflects the need for a more nuanced understanding beyond a dichotomous “use or don’t use” approach

- It facilitates transparent communication between teachers and students about what is appropriate and useful and why

- It is primarily an assessment design tool to be used in the process of discussing, creating, and updating assessments with GenAI in mind

What the AIAS Is Not

The flip side of this discussion is “what the AIAS is not”. Again, the AIAS is just an idea, and you are free to interpret it however you like. These are my interpretations of what the AIAS is not:

An assessment security instrument

The AI Assessment Scale is not a tool for assessment security. Beyond our recommendation that Level One (No AI) assessments are conducted technology-free where possible, we do not make any recommendations regarding assessment security. We acknowledge this in version two, where we said that at levels two to five, permitting any use of artificial intelligence essentially permits all use of artificial intelligence.

This is because there is no way to reliably detect AI use. It is therefore impossible to say to a student, “this is a level two task: you may use AI for planning, but you must then promise to stop.” Careful assessment design choices, especially at level two, can help to support this, but nothing can guarantee that a student won’t use AI beyond your guidelines.

In its original incarnation, the AI Assessment Scale used traffic light colours implying stop, slow, go. Our deliberate decision to move away from those colours reflects our constantly evolving understanding of the reality of these technologies. I explained those decisions in full in my article, “Why We’ve Driven Through the Traffic Lights,” and we discuss it in our version two updates.

Personally, I am not a fan of the term “assessment security”. My background in K-12 probably has something to do with this, since the term appears to be far more common in higher education. It is a term that brings to mind images of surveillance and adversarial behaviour. However, I have had many conversations with assessment security experts in Australia, and they are generally opposed to surveillance technologies, heavy-handed “catch and smack” methods, and other forms of “security theatre”.

The point is that neither I nor the other authors see the AIAS as a tool for assessment security: you cannot simply show the scale to a student and hope that they will not break your trust.

A shopping list of ideas

The AI Assessment Scale is designed to support educators working across a range of disciplines and areas of education, including K-12, vocational education, and higher education. This means that we try to support different assessment tasks and types while avoiding being overly prescriptive about uses of GenAI. We also know that GenAI has changed rapidly over the past few years, and any recommendations we make about particular apps or approaches will quickly become obsolete. I personally believe that “prompt engineering” and the use of chatbots as tutors are both short-lived applications of GenAI.

As such, the AI Assessment Scale is not a shopping list of ways to use GenAI. I understand the appeal of providing educators with lists of example prompts or potential use cases such as role playing, critique, spelling, grammar and punctuation, and so on, but I believe that the best way to learn how to use the technology is through experimentation within a discipline.

In the past, I have published resources along those lines myself, including the very popular Practical Strategies for AI series that later became the basis for my book. Whilst these form part of professional learning for educators, I do not think they are helpful in a framework like the AI Assessment Scale, since the potential uses of multimodal GenAI across different disciplines are far too many and too varied, and we are not experts in every discipline.

Instead, the AI Assessment Scale suggests that the disciplinary experts – the educators working alongside the students – should be ultimately responsible for determining how GenAI is or is not used in the context of their subjects’ assessments. However, this must be done with sufficient professional learning and support to understand the strengths and limitations of GenAI so that staff are not working based on opinions or conjecture about what the technology can or can’t do.

There must be a thoughtful balancing of domain expertise and technological expertise. We cannot simply throw a list of prompts at educators and call the job done.

A benchmark

In a similar fashion to the comment on assessment security, I do not see the AI Assessment Scale as a benchmark for students, for example, “at level 2, 20% of your assessment must use AI.” Other than at level five, where we say the student should use AI creatively, I would personally recommend against making the use of AI an assessment criterion.

Given the contentious nature of the technology and the fact that some students may object to its use on moral or ethical grounds, I never recommend using the AIAS as an imperative, e.g., “you will be assessed on your use of AI as a research tool,” unless the assessment in question comes from a discipline where artificial intelligence is a required syllabus outcome.

At levels 2-4, I don’t think there is a need to require AI use unless you are specifically teaching or using a particular application, in which case that application must be accessible and equitable.

As far as I’m aware, there aren’t yet many courses that address the use of large language models and related technologies at such a specific level. In workshops on the AIAS, I always encourage educators to teach what they mean to teach, and to assess what they have taught. If your course is not about teaching how to use ChatGPT, then your assessment should not have a criterion that judges students on their ability to write a prompt.

However, that does not mean the technology shouldn’t be explicitly taught. If you are redesigning an assessment at Level 2, you should be prepared to explore suitable AI applications with students in the same way that you would usually teach the recommended software, methods and approaches of any course. Again, this is where the need to balance technological and domain expertise comes into play.

So to sum up these thoughts, there are three things which I believe the AI Assessment Scale is not:

- It is not an assessment security tool and will not stop students from “cheating” or inappropriately using AI

- It is not a shopping list of prompts or methods to use AI

- It is not a benchmark for “how much AI to use” or a criterion unless it is a necessary part of the task

How I Use the AI Assessment Scale: Step-by-step

In a future post, I will move on to how I use the scale in practice when I work with faculties in K-12 and higher education, and when I work with students. Again, there is some variance between Mike, Jasper, and me, due to our different teaching experiences. That is fine: the AIAS is designed with exactly that kind of flexibility in mind.

I will explain how I would use the AI Assessment Scale at an organisational level, a faculty level, and an individual teacher level.

Here’s a taster of some of the key ideas in the upcoming post:

- Assessments should be broken down into multiple tasks, assessed formally and informally over time

- Some assessments should permit or explore the use of AI, but only when it does not get in the way of the learning outcomes

- You must be clear on what the learning outcomes are before judging whether AI gets in the way

- Not every assessment can be at “Level 1: No AI”. This is unfair to students, unrealistic, and creates an administrative overhead for educators.

Between now and then, I’d encourage you to look around and see how others are using the scale. For instance, Chevalier College in NSW recently shared an example of how they’re bringing together the University of Sydney’s Two Lanes approach and the AIAS:
