For a while now, I’ve been wrestling with a question that keeps resurfacing in my work with schools, my PhD research, and my own day-to-day experience with AI: what is the point of human experts when AI can produce increasingly competent outputs across virtually every domain?

I’ve written about expertise and AI before. I’ve argued that you need expertise to use AI well, and that when students skip the productive struggle of developing that expertise, the consequences run deep. But I’ve been circling something I haven’t quite named until now. Something about what experts actually do that AI can’t, and why it matters not just for the individual expert but for everyone around them.

I’m calling it Expert Signals.

What AI can and can’t do with expertise

I’ve been using Claude Code and Claude Opus 4.6 to build things: the Teaching AI Ethics website, various dashboards, little widgets and tools. And as with any use of these technologies, what I find is that the upper bound of my own expertise sets the limit of what I can push the technology to do.

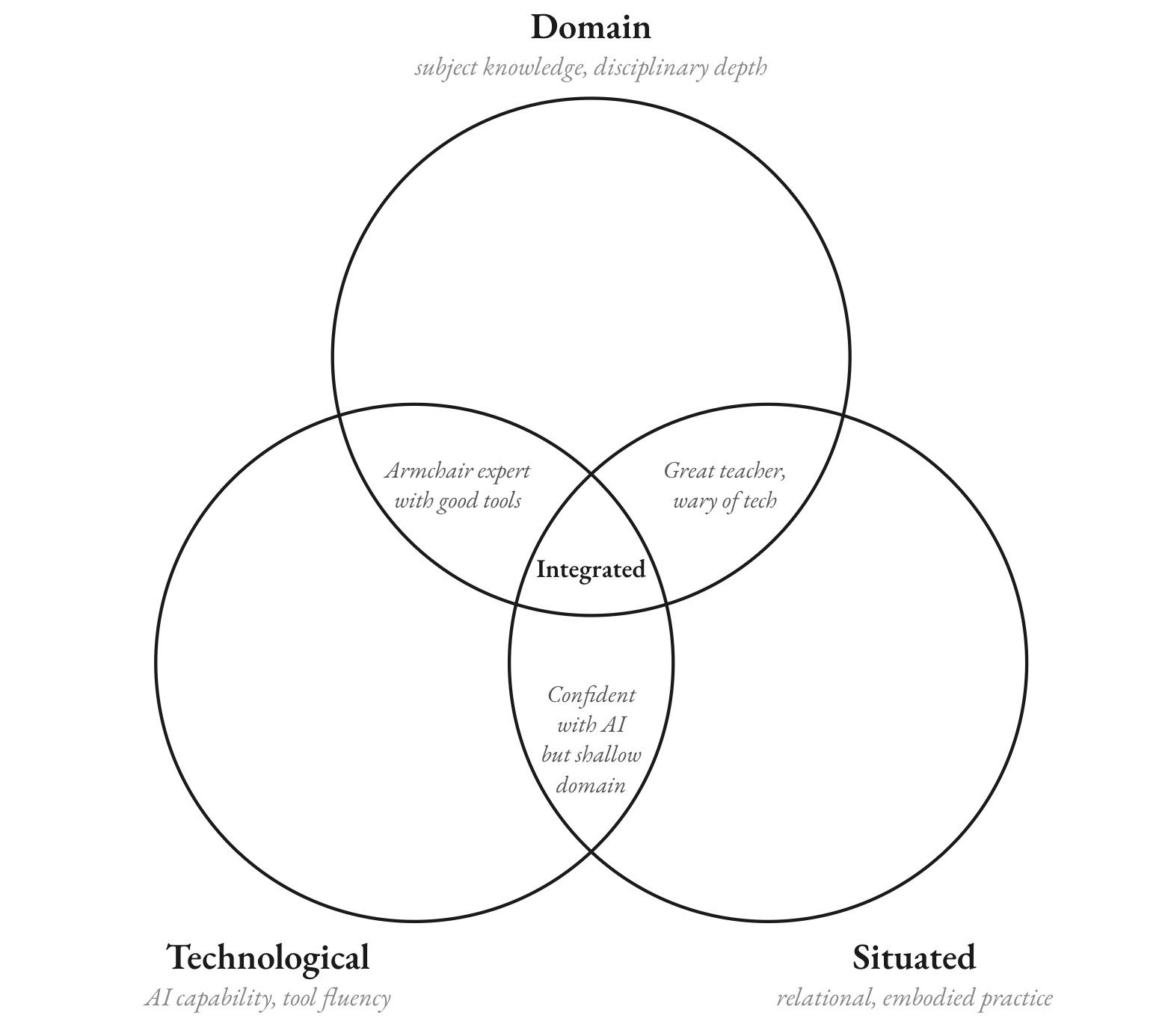

That expertise is, as I’ve argued before, three-dimensional: domain expertise (what I know about my subject), technological expertise (how I work with AI), and situated expertise (the contextual, relational, hard-won knowledge that develops over years of practice).

By any benchmark, models like Claude Opus 4.6 are capable of disciplinary expertise across multiple domains. But here’s the thing: AI can’t help me to really push the boundaries of my own expertise. It can help me execute within the bounds of what I already know. It cannot show me what I don’t know I’m missing.

Learning from an expert

Here’s an example from my own recent experience. Simon Willison is an accomplished software engineer. He’s highly skilled at using large language model technologies in software. Willison coined the phrase “prompt injection.” He popularised the term “slop.” He recently published a piece where he and two colleagues coined the term “deep blue” for the sense of ennui that software engineers feel when they see AI completing entire jobs for them. He is, by any definition, an expert in his field.

Every time I read one of his posts about using large language models, I learn something new, something I can take back and apply to my own practice, something I wouldn’t have learned by just fumbling around with a large language model by myself.

The article I have in mind comes from Willison’s guide to “Agentic Engineering”. He talked about the importance of testing in the development process, and about how, in software development, testing often gets left to the end. He described red-green testing, where you write a test first and watch it fail (red), then write just enough code to make it pass (green). But it wasn’t just the technical knowledge that was valuable. It was his mindset, his approach, the kind of situated knowledge that comes from decades spent on the job.
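For readers who haven’t seen it, the red-green loop can be sketched roughly as follows. This is a minimal illustration and is not taken from Willison’s guide: the function names `word_count_stub`, `word_count`, and `check_word_count` are hypothetical, chosen only to show a test failing first and then passing.

```python
# Hypothetical example: these names are illustrative, not from Willison's guide.

def word_count_stub(text: str) -> int:
    """Red step: a placeholder written only so the test can run and fail."""
    return 0


def word_count(text: str) -> int:
    """Green step: just enough real logic to make the test pass."""
    return len(text.split())


def check_word_count(fn) -> bool:
    """The test, written before the real implementation existed."""
    try:
        assert fn("expert signals matter") == 3
        assert fn("") == 0
        return True
    except AssertionError:
        return False


# Red: the test fails against the stub, showing it can catch a wrong answer.
red_result = check_word_count(word_count_stub)
# Green: the same test passes once the real implementation is in place.
green_result = check_word_count(word_count)
print("red passes?", red_result, "| green passes?", green_result)
```

The point of the red step is to confirm that the test is capable of failing at all; a test that has only ever been seen passing tells you much less.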

This is knowledge you acquire. It’s domain expertise, but it transcends domain expertise. I could pick up a book and learn what all the software engineering acronyms mean. I could read the Wikipedia entry on red-green testing. But I wouldn’t internalise that knowledge until I’d built software and spent a long time doing it, which, at this point in my life, is unlikely to ever happen.

When I spend time reading Willison’s article, I’m borrowing from his situated expertise and bringing it into my technological expertise. I’m learning something that AI, on its own, would not arrive at. If I go into Claude Code and start prompting, it’s not immediately going to follow Willison’s process. His approach is unique and personal to him, shaped by years of practice.

Expert signals

This is the idea I’m trying to capture: the role of the expert in distributing situated knowledge to others. I’m calling these transmissions expert signals: the moments when a human expert shares not just what they know, but a distillation of how they came to know it, the insights that emerge from years of practice in a specific context, community, or discipline.

Expert signals are what you can’t get from a probability distribution of all the knowledge consumed during training. They are the product of what James C. Scott, in Seeing Like a State, calls metis: the practical, embodied knowledge that resists codification. Metis is the home cook who adjusts by taste. It’s the gardener who knows when to plant by the feel of the soil. It’s the teacher who reads the room mid-lesson. It’s the software engineer whose gut tells them to front-load the testing.

Scott describes metis as

“a wide array of practical skills and acquired intelligence in responding to a constantly changing natural and human environment…the mode of reasoning most appropriate to complex material and social tasks where the uncertainties are so daunting that we must trust our experienced intuition and feel our way.”

Metis resists simplification into deductive principles because the environments in which it is exercised are so complex and non-repeatable that formal procedures of rational decision making are impossible to apply.

AI is essentially a very sophisticated form of techne, the contrasting term for formal, codifiable, transferable knowledge. Techne is “organised analytically into small, explicit, logical steps” that can be transmitted through instruction. Sound familiar? That’s basically how we describe what a large language model does: pattern-match across enormous bodies of codified knowledge and produce outputs that follow logical, well-structured forms. AI excels at techne. It has no metis.

And metis is where expert signals originate.

Isn’t this just… good teaching?

Yes. That’s exactly what it is. The key part I’m identifying here is the role of the expert in distributing situated knowledge to others. Isn’t that just the definition of what a good teacher does? A good teacher doesn’t just provide domain knowledge. They share the sum total of their situated expertise. And every single teacher’s version of that is individual.

My approach to teaching literature is informed by everything I’ve done in my life. It’s informed by my undergraduate English and American Literature degree, which variously focused on counter-culture literature, Black poetry, and Victorian Gothic. That’s totally different to the expertise of a literature teacher who was primed on Elizabethan drama and Australian popular culture. Both are valid, both are rich, and both are completely beyond the reach of a language model, which is essentially a probability distribution of all of the knowledge it consumed during training. AI lacks the situated expertise to provide nuance and interest and to spark fantastic new ideas. It lacks the human ability to contextualise knowledge.

Distributed expertise in the AI age

So, where does this leave us and our “human” expertise?

I think what we’re going to need, more than anything else, is human experts who are passionate, beyond all reason, about their disciplines. This has to be true of teachers and students alike.

I read Willison’s blog posts about software engineering, which is not my field, because I am personally invested in the topic. It’s not because I want to be a software engineer. It’s because I want to learn something from an expert in software engineering that I can apply to my own day-to-day use of a technology. It’s because I want to learn from somebody who has spent a lifetime working with software, and borrow just a fraction of their experience.

This is why I don’t believe AI is a terminal threat to critical and creative thinking or to expertise. But I do think there’s a paradigm shift happening. AI will increasingly become part of how we acquire domain expertise, the techne, the codifiable knowledge. What it can’t provide is the situated, contextual, deeply human metis that makes that knowledge meaningful. So expertise becomes distributed: AI does a really good job of content and domain knowledge, and humans do a really good job of situated expertise. The question is what happens at the interface.

How do students become experts? How do we ensure the next generation develops the metis that only comes from sustained, difficult practice? If the distribution of domain knowledge into the hands of AI changes the baseline, what does the expert of 30 years from now look like?

I don’t know the answer. I’ve written about the risks of students skipping the struggle, and those risks are real. But I also think there’s something to be gained if we get this right.

The celebration of expertise

In the end, this is what I keep coming back to: the role of human experts is more important than ever, because they can translate their situated, lived experience into fascinating, surprising, unique insights that push the boundaries of other people’s domain and technological expertise.

This isn’t a cult of celebrity. It’s not about flocking to thought leaders’ books and paying thousands to attend conferences. It’s a celebration of the everyday expert: the teacher whose situated expertise transforms a classroom, the nurse whose years of practice let them spot what the diagnostic AI misses, the tradesperson whose feel for materials goes beyond anything a manual can teach.

It’s also about creating communities of distributed experts, whether they’re ad-hoc communities or formal ones, communities of practice or action research groups, university-industry partnerships or school initiatives where expert teachers are put in the room with passionate students. All of these ideas flow together naturally. They’re all expressions of the same fundamental principle: expertise is most powerful when it’s shared, and the sharing requires the kind of situated, contextual, deeply human knowledge that no AI can replicate.

The question we should be asking isn’t “will AI replace experts?” It’s “how do we build systems that amplify expert signals?” Because the answer to that question determines whether AI makes us collectively smarter or just collectively more efficient at producing ordinary work.
